AI Fluency Assessment Guide

Purpose of This Guide

This guide focuses on how to design assessments that incorporate AI in ways that are pedagogically sound and clearly communicated to students. Its companion, the AI Fluency Teaching Guide, covers how to use AI across preparation, delivery, and reflection.

The goal isn't just to help you make decisions about AI in assessment. It's to embed the framework into your practice so that considering these dimensions becomes second nature.

Phase 1: Define the Cognitive Work

Before you consider AI at all, you need to be crystal clear about what students are actually learning to do. Work through the three questions below.

Question 1: What are students learning to do?

- Technical skill execution?

- Critical analysis and evaluation?

- Creative problem-solving?

- Strategic decision-making?

- Synthesis of complex information?

Question 2: Why does this assignment exist?

- To demonstrate procedural knowledge?

- To show understanding of concepts?

- To apply theory to novel situations?

- To develop professional judgment?

Question 3: What evidence would prove they can do it?

- Observable performance?

- A tangible artefact?

- Articulation of reasoning?

- Iterative development process?

If you can't answer these clearly, STOP. Your assessment is broken regardless of AI.

A Note on Bloom's Taxonomy in Relation to AI

The bottom four levels (Remember, Understand, Apply, and Analyse) are increasingly within AI's capability. A student who only needs to recall facts, summarise concepts, complete standard problems, or identify patterns can do all of that with AI assistance in ways that are genuinely difficult to detect or prevent.

The top two levels are different in kind, not just degree. Evaluate requires students to make and justify judgements: to critique, defend a position, and reason under genuine ambiguity. Create requires students to produce something original reflecting their own thinking and choices.

If your assessments don't reach Evaluate and Create, you're testing what AI can already do.

Phase 2: Map to AI Modality

Based on your Phase 1 answers, determine which AI modality makes pedagogical sense:

AUTOMATION: AI performs defined tasks

Appropriate when: You want students to demonstrate task delegation, quality control, and efficiency.

Examples: Using AI to generate first drafts, data summaries, basic code snippets.

Assessment focus: Selection judgment, output evaluation, refinement skill.

AUGMENTATION: Human-AI collaboration

Appropriate when: You want students to demonstrate iterative thinking, creative development, and complex problem-solving.

Examples: Co-writing analysis, collaborative coding, research synthesis.

Assessment focus: Steering the collaboration, critical engagement, value-adding beyond AI capability.

AGENCY: Configuring independent AI

Appropriate when: You want students to demonstrate systems thinking, user experience design, and ethical consideration.

Examples: Creating chatbots, designing AI tutors, building interactive experiences.

Assessment focus: Behavioural design, testing methodology, responsibility for outcomes.

NONE: AI Mitigation

Appropriate when: The cognitive work requires demonstrating personal capability independent of AI.

Examples: Timed exams, presentations, practical demonstrations, portfolios with verifiable process evidence.

Assessment focus: Authentic performance that establishes human capability.

Phase 3: Design Your Assessment Task and Rubric Weighting

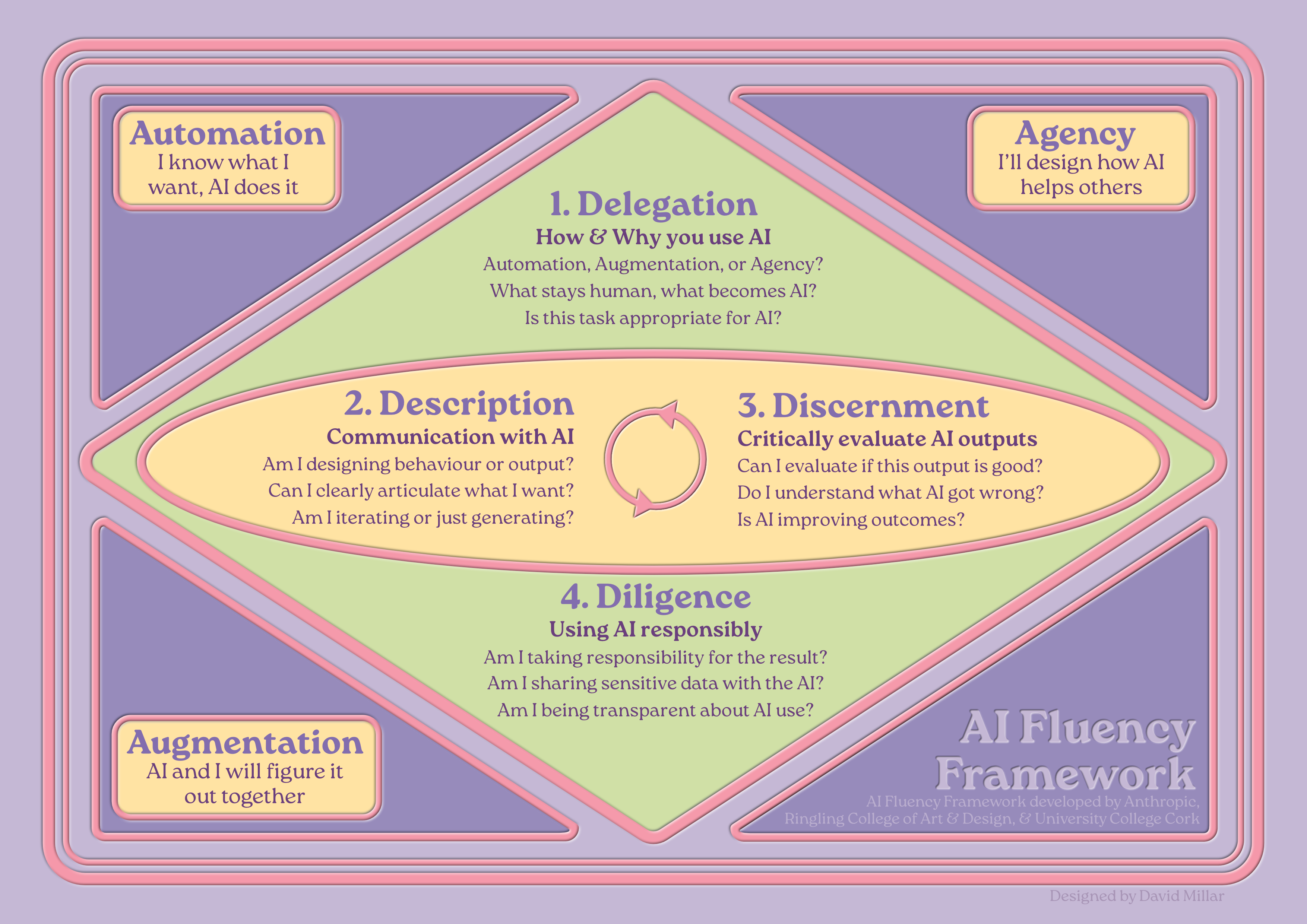

Understanding the 4 Ds

Delegation: The ability to make strategic decisions about when, how, and which AI tools to use. This includes understanding AI capabilities and limitations and making informed choices about what work should be done by AI, by humans, or collaboratively.

Description: The ability to communicate effectively with AI systems through prompts, instructions, and iterative dialogue. This includes crafting clear specifications, refining through iteration, and configuring AI behaviours.

Discernment: The ability to critically evaluate AI outputs and collaborative processes. This includes identifying errors, biases, and limitations, as well as recognising when AI adds value versus when human intervention improves outcomes.

Diligence: The ability to use AI responsibly, ethically, and transparently. This includes fact-checking, acknowledging AI use appropriately, considering ethical implications, and taking responsibility for final outputs.

Automation Assessments

Students use AI to independently perform clearly defined tasks. The focus is on efficiency, quality control, and appropriate task selection.

Typical task: Give students a defined outcome, let them choose and use AI tools to achieve it, and ask for a brief reflection on their tool choices and how they evaluated output quality.

Suggested weighting: Delegation 30% | Description 20% | Discernment 40% | Diligence 10%

Example

"Use AI tools of your choice to create a competitive analysis of three companies in the renewable energy sector. Submit: (1) The analysis report, (2) A 300-word reflection explaining which tools you chose, why, and how you evaluated output quality."

Augmentation Assessments

Students collaborate iteratively with AI as a thinking partner. The process involves back-and-forth dialogue where both human and AI contribute.

Typical task: Give students a complex, open-ended problem that requires iterative development, and ask them to document their process.

Suggested weighting: Delegation 10% | Description 30% | Discernment 30% | Diligence 30%

Example

"Collaborate with AI to develop a theoretical framework for analysing gender representation in video games. Submit: (1) Your framework paper, (2) A conversation log documenting your theoretical development process, (3) A 500-word critical reflection on where AI helped you explore theoretical connections and where your disciplinary expertise shaped the analysis."

Agency Assessments

Students configure AI systems to act independently and interact with others. The focus is on designing AI behaviour, not just obtaining outputs.

Typical task: Design and configure an AI agent for a specific purpose, with documentation and ethical reflection.

Suggested weighting: Delegation 25% | Description 25% | Discernment 10% | Diligence 40%

Example

"Design an AI tutor that helps students learn molecular orbital theory through Socratic questioning. Submit: (1) The configured AI tutor, (2) Testing documentation showing how it responds to common misconceptions, (3) Ethical impact statement addressing risks of reinforcing errors, accessibility, and transparency about AI limitations."

AI-Mitigated Assessments

The 4 Ds framework does not apply. Use traditional disciplinary assessment criteria. However, consider: Can you verify the work is the student's own? Does the format test what you want to test? Are you mitigating AI because it's pedagogically sound, or because traditional assessment is familiar?

Quick Reference: Suggested Rubric Weightings

| Modality | Delegation | Description | Discernment | Diligence |
|---|---|---|---|---|
| Automation | 30% | 20% | 40% | 10% |
| Augmentation | 10% | 30% | 30% | 30% |
| Agency | 25% | 25% | 10% | 40% |
| AI-Mitigated | Traditional disciplinary criteria | n/a | n/a | n/a |

These weightings are starting points. Adjust based on your specific disciplinary context and learning outcomes.
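The guide does not prescribe how the four competency marks combine into an overall mark. As a minimal sketch, assuming a simple weighted average and marks recorded as percentages, the arithmetic below applies the suggested weightings. The `weighted_mark` function, the `WEIGHTINGS` layout, and the example marks are all hypothetical.

```python
# Suggested starting weightings from the quick-reference table (percentages).
WEIGHTINGS = {
    "Automation": {"Delegation": 30, "Description": 20, "Discernment": 40, "Diligence": 10},
    "Augmentation": {"Delegation": 10, "Description": 30, "Discernment": 30, "Diligence": 30},
    "Agency": {"Delegation": 25, "Description": 25, "Discernment": 10, "Diligence": 40},
}


def weighted_mark(modality: str, marks: dict[str, float]) -> float:
    """Combine per-competency marks (0-100) into one overall mark.

    Assumes a simple weighted average; adjust if your institution
    aggregates rubric criteria differently.
    """
    weights = WEIGHTINGS[modality]
    # Adjusted weightings must still total 100%.
    if sum(weights.values()) != 100:
        raise ValueError(f"Weightings for {modality} must total 100%")
    # Every competency needs exactly one mark.
    if set(marks) != set(weights):
        raise ValueError("Provide exactly one mark per competency")
    return sum(marks[c] * weights[c] for c in weights) / 100


# Hypothetical marks for an Automation assessment.
mark = weighted_mark(
    "Automation",
    {"Delegation": 65, "Description": 58, "Discernment": 72, "Diligence": 80},
)
print(mark)  # 67.9
```

Because Discernment carries 40% in an Automation assessment, a weak Discernment mark pulls the overall result down four times as much as an equally weak Diligence mark (10%). If you adjust the weightings for your context, the total-of-100% check catches arithmetic slips.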

Modular Assessment Rubric Framework

| Competency | Fail (0-39%) | Poor (40-49%) | Adequate (50-59%) | Good (60-69%) | Very Good (70-79%) | Excellent (80-100%) |
|---|---|---|---|---|---|---|
| Delegation | No evidence of considered AI tool selection; fundamental misunderstanding of appropriate AI use | Inappropriate AI tool choices; unclear rationale; ineffective task delegation | Functional AI tool selection; basic understanding of appropriate AI use; some tasks could be better allocated | Appropriate AI tool choice for most tasks; reasonable rationale for AI/human split; effective task breakdown | Strong AI tool selection with clear justification; thoughtful consideration of AI/human balance; well-structured task decomposition | Demonstrates sophisticated judgment in AI tool selection; clearly articulates rationale for automation vs. human contribution; optimal task decomposition |
| Description | No meaningful prompts provided; absence of iteration; fundamental inability to communicate effectively with AI | Vague or generic prompts; little refinement; ineffective AI communication | Basic functional prompts; some iteration; communication gets results but lacks sophistication | Clear and specific prompts; evidence of refinement; generally effective AI communication | Clear, well-structured prompts showing strong iterative development; sophisticated AI communication | Prompts/instructions are precise, contextual, and iteratively refined; demonstrates sophisticated understanding of how to communicate with AI |
| Discernment | No evidence of evaluation; uncritical acceptance of all AI outputs; absence of quality control | Little critical evaluation; accepts AI outputs uncritically; minimal quality control | Basic evaluation of AI outputs; catches obvious errors; some quality control evident | Evaluates AI outputs competently; catches most errors; shows evidence of quality control | Strong critical evaluation of AI outputs; identifies most errors and limitations; shows substantial improvement through human input | Critically evaluates AI outputs; identifies errors, biases, and limitations; demonstrates clear improvement through human intervention |
| Diligence | No transparency about AI use; no fact-checking; disregards ethical implications; refuses responsibility | Unclear about AI use; minimal fact-checking; ignores ethical implications; deflects responsibility | Mentions AI use; basic fact-checking; surface-level ethical consideration; accepts responsibility | Clear about AI use; adequate fact-checking; considers main ethical issues; acknowledges responsibility | Clear and detailed transparency; systematic fact-checking; thoughtful consideration of ethical implications; takes responsibility | Fully transparent about AI use; thoroughly fact-checks; considers ethical implications in depth; takes clear responsibility for outcomes |
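To turn a percentage mark into one of the rubric's bands, a lookup over each band's lower bound is enough. This is a minimal sketch: the `band_for` function is hypothetical, and it assumes that fractional marks (for example 39.5%) stay in the lower band unless you round them first, which the rubric itself does not specify.

```python
# Band boundaries from the rubric table: (lower bound in %, band label),
# ordered from highest to lowest so the first match wins.
BANDS = [
    (80, "Excellent"),
    (70, "Very Good"),
    (60, "Good"),
    (50, "Adequate"),
    (40, "Poor"),
    (0, "Fail"),
]


def band_for(mark: float) -> str:
    """Return the rubric band for a percentage mark between 0 and 100."""
    if not 0 <= mark <= 100:
        raise ValueError("Mark must be between 0 and 100")
    for lower, label in BANDS:
        if mark >= lower:
            return label
    # Unreachable: the lowest band starts at 0.
    raise AssertionError("no band matched")


print(band_for(67.9))  # Good
print(band_for(39.5))  # Fail (not rounded up to Poor)
```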

Communicating with Students

Before presenting your assessment, help students understand that developing AI fluency builds a genuine competitive advantage. Students need to see that deferring their thinking to AI makes them less valuable, not more efficient.

The Reality Students Are Entering

What employers want: People who know when to use AI and when not to, who can get better results from AI than their competitors, who can add value beyond what AI can do alone, and who take responsibility for outcomes.

What they don't want: People who can't think without AI, who can't tell good AI output from rubbish, who produce work indistinguishable from everyone else's AI-generated mediocrity, or who hide behind "the AI made a mistake."

For Automation Assessments

Your competitive advantage comes from: judgment in knowing which tasks to automate, quality control in spotting errors before they damage your reputation, efficiency in getting good results faster, and accountability in vouching for your work.

For Augmentation Assessments

Your competitive advantage comes from: iterative thinking that explores ideas you wouldn't reach alone, creative leverage that lets you focus on sophisticated thinking, critical engagement in knowing when AI improves your work, and an authentic voice that produces work reflecting your own thinking.

For Agency Assessments

Your competitive advantage comes from: systems thinking in understanding how AI behaviour affects users, design sophistication in configuring AI that actually helps people, ethical judgment in anticipating potential harm, and responsibility for what you create.

For AI-Mitigated Assessments

Your competitive advantage comes from: genuine expertise that is yours rather than borrowed, authentic judgment based on your own thinking, confidence in performing without tools, and trust built on personal capability.

The bottom line: Treating AI as a shortcut makes you ordinary. Anyone can type a prompt. Genuinely skilled people know when to use AI, how to use it well, and when to rely on their own expertise.

Academic Integrity with AI

Suspected Unauthorised AI Use

Don't: Immediately accuse or penalise based on suspicion alone.

Do: Invite the student to a conversation about their work. Ask them to explain their process and key decisions, probe their understanding of specific content, ask them to elaborate on points or apply concepts to new scenarios, and give them the opportunity to demonstrate authentic understanding.

Important: If a student can demonstrate genuine understanding and engagement with the work, the process they used to get there is less critical than the learning achieved.

Prevention is better than detection: If you're frequently suspecting AI misuse, your assessment design may need revisiting.

Agency Assessments: When AI Agents Fail

Distinguish between technical failure (the system doesn't work as intended) and design failure (the system works but is poorly designed).

Don't penalise students for: Technical glitches outside their control, single-instance failures, platform limitations they couldn't reasonably overcome.

Do assess on: Quality of configuration and instructions, evidence of iterative testing, understanding of why failures occurred, quality of documentation and ethical considerations.

Essential Assessment Brief Elements

- Which uses of AI are permitted, and which modality the assessment uses

- What evidence is required (process documentation, AI use statements, conversation logs)

- How to demonstrate authentic engagement with the work

- What constitutes misconduct in the context of this specific assessment

Generic statements like "appropriate use of AI" are insufficient. Specify exactly what you expect students to document and submit.

Further Support

For assistance with assessment design, contact your Learning Designer. For guidance on AI risk levels, talk to Prof. Akeyo. To work through these design decisions interactively, use the AI Fluency Assessment Design Tool.

This guide is designed as a living document. The framework will evolve as AI technologies and pedagogical practices develop.

This guide was designed and developed by David Millar as part of his role as a Digital Learning Designer at the University of Dundee. It was refined and evaluated with the assistance of Morna, a custom agent running on Claude (Anthropic) and trained on the Creative Compass framework by David Calum Millar. Dr. Ross McKenzie reviewed the guide and provided feedback. The guide draws on the AI Fluency Framework (Dakan, R. and Feller, J. (2025) Framework for AI Fluency (Practical Summary Document), Version 1.1, Ringling.edu/ai/), the revised Bloom's Taxonomy (Anderson, L. W. and Krathwohl, D. R. (Eds.) (2001)), and the Learning Design Compass framework (Millar, D. C. (2026)).

This guide was co-developed with Morna, a custom AI agent built on Claude and designed using the Creative Compass by David Calum Millar.