AI Fluency Assessment Design Tool

Phase 1: Define the Cognitive Work

Before you consider AI at all, you need to be crystal clear about what students are actually learning to do.

What are students learning to do?

Consider: Technical skill execution? Critical analysis and evaluation? Creative problem-solving? Strategic decision-making? Synthesis of complex information?

Why does this assignment exist?

Consider: To demonstrate procedural knowledge? To show understanding of concepts? To apply theory to novel situations? To develop professional judgment?

What evidence would prove they can do it?

Consider: Observable performance? A tangible artefact? Articulation of reasoning? Iterative development process?

If you can't answer these clearly, STOP. Your assessment may need redesigning regardless of AI.

Phase 2: Map to AI Modality

Based on your Phase 1 answers, determine which AI modality (Automation, Augmentation, Agency, or AI-Mitigated) makes pedagogical sense for this assessment.

Explain your rationale for choosing this modality:

Phase 3: Design Your Assessment Task

Describe your assessment task

What will students actually do? What will they submit?

What evidence of process will you require?

For example: conversation logs, reflective statements, draft stages, testing documentation.

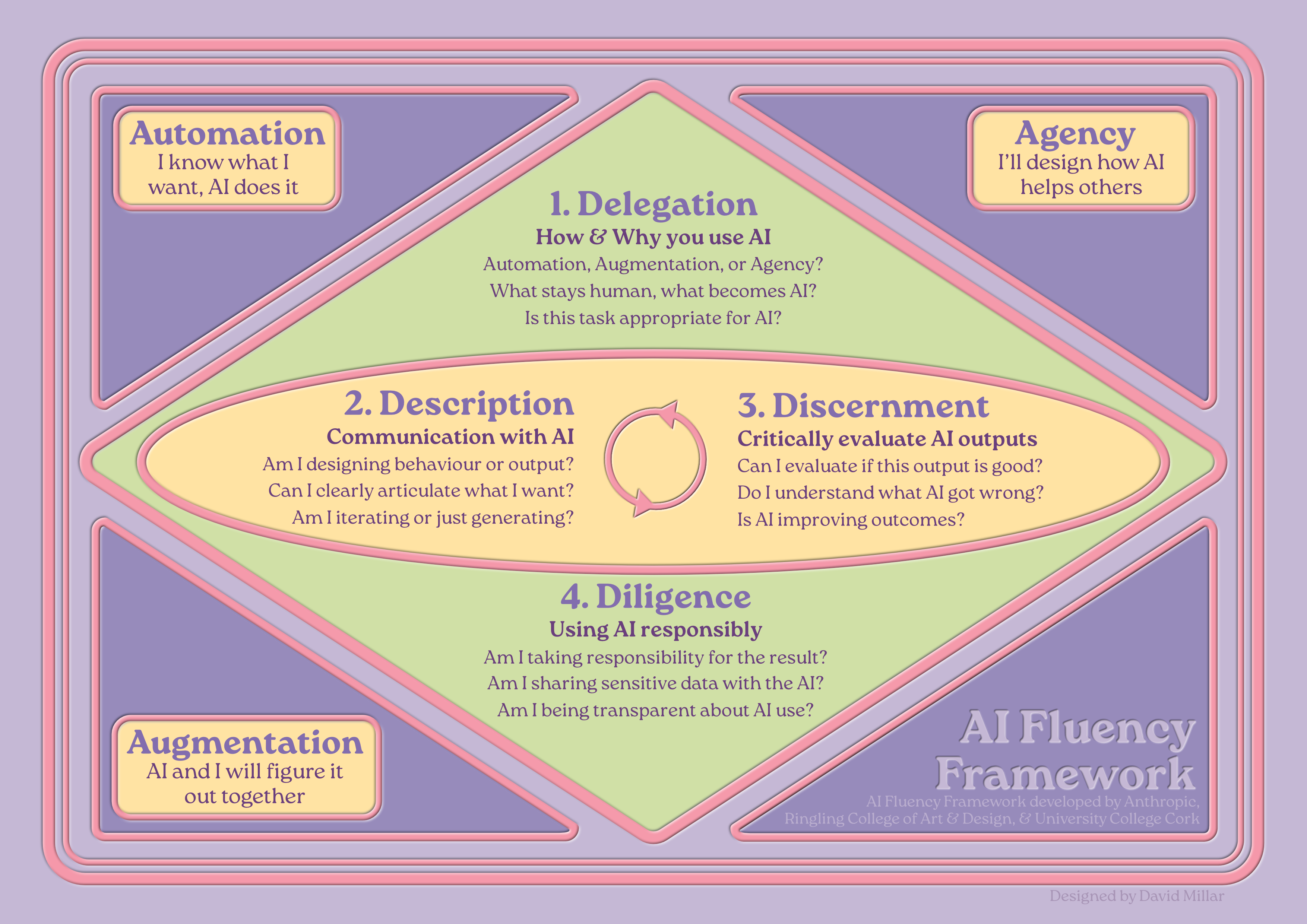

Rubric Weighting: The 4 Ds

Different modalities emphasise different competencies. Adjust the weightings below based on your chosen modality and disciplinary context.

Delegation

The ability to make strategic decisions about when and how to use AI, and which AI tools to use.

How will you assess this competency?

Description

The ability to communicate effectively with AI systems through prompts, instructions, and iterative dialogue.

How will you assess this competency?

Discernment

The ability to critically evaluate AI outputs and collaborative processes.

How will you assess this competency?

Diligence

The ability to use AI responsibly, ethically, and transparently.

How will you assess this competency?

Rubric Balance Check

Review your rubric weightings across the 4 Ds and compare them with the suggested starting points for your chosen modality.

Suggested starting points (weightings in the order Delegation/Description/Discernment/Diligence): Automation 30/20/40/10; Augmentation 10/30/30/30; Agency 25/25/10/40; AI-Mitigated: use traditional disciplinary criteria.

Assessment Brief Checklist

When writing your assessment brief, ensure you include the following essential elements:

Communicating with Students

Students need to understand that developing AI fluency builds a genuine competitive advantage. Use this space to draft your student-facing communication about this assessment.

What should students understand about why this assessment is designed this way?

Consider: What skills are they developing? Why does this matter for their career? What happens if they engage superficially vs. genuinely?

What specific guidance will you give students about AI use in this assessment?

Be precise about what's permitted, what must be documented, and what constitutes misconduct.

Academic Integrity Considerations

How will you handle suspected unauthorised AI use?

Remember: Invite conversation before accusation. If a student can demonstrate genuine understanding, the process they used is less critical than the learning achieved.

Prevention is better than detection: does your assessment design minimise the incentive for misuse?

If you're frequently suspecting AI misuse, your assessment design may need revisiting. Refer back to Phase 1 and Phase 2.

This AI Fluency Assessment Design Tool was designed by David Calum Millar, using the AI Fluency Framework (Dakan, Feller & Anthropic, 2025), in collaboration with Morna, a custom agent running on Claude (Anthropic) and trained on David Calum Millar's Creative Compass and Learning Design Compass frameworks. It was then developed using Claude Code. The final design, all editorial decisions, and responsibility for the tool’s content rest with the author.